hyper-converged storage

Hyper-converged storage is a software-defined approach to storage management that combines storage, compute, virtualization and sometimes networking technologies in one physical unit that is managed as a single system.

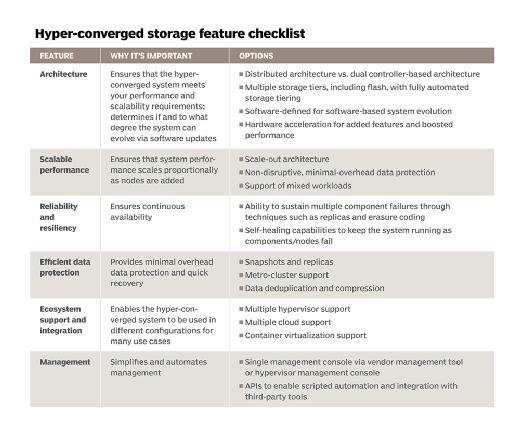

Hyper-converged technologies provide greater control over storage provisioning in a virtual server environment. In addition to giving administrators single-pane-of-glass management capabilities, hyper-converged storage nodes can be connected to one another and scaled out horizontally. This enables administrators to create a distributed storage infrastructure in which direct-attached storage (DAS) components from each physical server are combined to create a logical pool of disk capacity.

Hyper-converged storage is a type of software-defined storage (SDS), because each node runs a virtualization software layer identical to the one on every other node in the cluster. That software layer virtualizes the resources in the individual node and shares them with the other nodes in the cluster. This enables administrators to use the cluster's resources as a single storage or compute pool.
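The pooling idea described above can be illustrated with a minimal sketch. The `Node` class, the node names and the capacities are hypothetical and do not come from any vendor's API. The sketch also reports raw capacity only and ignores the replication or erasure-coding overhead that a real HCI platform would subtract.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """A hypothetical HCI node with its own direct-attached disks."""
    name: str
    disk_tb: list[float]  # raw capacity of each local disk, in TB
    cpu_cores: int


def pool_capacity_tb(nodes: list[Node]) -> float:
    """Raw capacity of the logical pool: the sum of every node's local disks."""
    return sum(sum(node.disk_tb) for node in nodes)


def pool_cores(nodes: list[Node]) -> int:
    """Total CPU cores the cluster exposes as one compute pool."""
    return sum(node.cpu_cores for node in nodes)


cluster = [
    Node("node-1", [4.0, 4.0], 16),
    Node("node-2", [4.0, 4.0], 16),
    Node("node-3", [8.0, 8.0], 32),
]

print(pool_capacity_tb(cluster))  # 32.0
print(pool_cores(cluster))        # 64

# Scaling out: adding a node grows both the storage pool and the compute pool.
cluster.append(Node("node-4", [4.0, 4.0], 16))
print(pool_capacity_tb(cluster))  # 40.0
```

In this model, adding a node is the only way the pool grows, which mirrors the horizontal scale-out behavior described above.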

Hyper-converged storage vs. converged storage

In a hyper-converged storage infrastructure system, each physical box or appliance is a node in the larger cluster of shared resources, including storage. The storage attached to the node is shared with the overall storage pool for the entire cluster, and the storage controller function is built into the individual nodes.

In converged infrastructure systems, which are sometimes referred to simply as converged systems, the compute, network and storage resources are combined in a physical server or appliance, but the storage is directly attached and only available to that physical box.
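The distinction drawn in this section can be reduced to one question: how much storage can a workload on a given box reach? The following is a simplified sketch of that contrast as the section describes it. The function name, node names and capacities are illustrative assumptions, not a model of any specific product.

```python
def visible_capacity_tb(local_tb: dict[str, float], node: str,
                        hyper_converged: bool) -> float:
    """Raw capacity (TB) reachable by a workload running on `node`.

    local_tb maps each node name to the capacity of its direct-attached disks.
    """
    if hyper_converged:
        # Every node's local storage is shared into one cluster-wide pool.
        return sum(local_tb.values())
    # Converged, as described above: storage is only available to its own box.
    return local_tb[node]


local_tb = {"box-a": 10.0, "box-b": 20.0, "box-c": 30.0}

print(visible_capacity_tb(local_tb, "box-a", hyper_converged=True))   # 60.0
print(visible_capacity_tb(local_tb, "box-a", hyper_converged=False))  # 10.0
```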

The benefits and drawbacks of hyper-converged storage

One of the primary advantages of using a hyper-converged infrastructure (HCI) is that the virtualization aspect makes it possible to use commercial off-the-shelf hardware based on inexpensive x86 processors to make up the individual nodes. This means HCI devices can be less expensive if IT administrators build their own, and vendors can pay less for the components of a complete appliance.

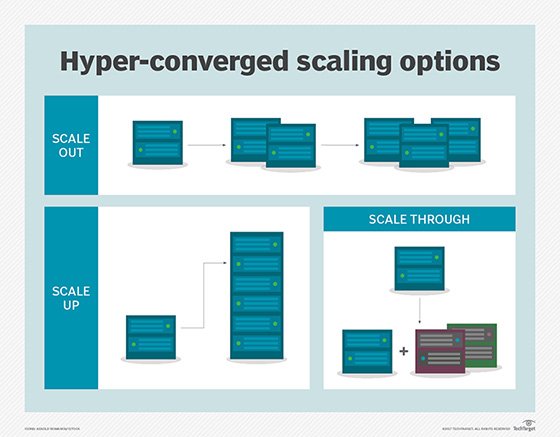

Another advantage is that more nodes can be added to a cluster, which makes scale-out storage easy to implement.

One potential disadvantage is that base HCI nodes include both storage and compute, so a user must add compute even if they only need more capacity, or vice versa. However, many HCI platforms now include storage- or compute-centric nodes to alleviate that problem.
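A short worked example shows why coupled nodes can leave resources stranded. It assumes every node is an identical base node with fixed storage and compute. The node size (20 TB, 16 cores) and the workload requirement (100 TB, 32 cores) are made-up figures chosen only for illustration.

```python
import math


def nodes_needed(capacity_tb: float, cores: int,
                 node_tb: float, node_cores: int) -> int:
    """Number of identical base nodes required to satisfy both requirements.

    Storage and compute arrive together in each node, so the larger of the
    two node counts determines the size of the cluster.
    """
    for_storage = math.ceil(capacity_tb / node_tb)
    for_compute = math.ceil(cores / node_cores)
    return max(for_storage, for_compute)


NODE_TB, NODE_CORES = 20, 16      # hypothetical base node
need_tb, need_cores = 100, 32     # hypothetical workload requirement

count = nodes_needed(need_tb, need_cores, NODE_TB, NODE_CORES)
surplus_cores = count * NODE_CORES - need_cores

print(count)          # 5 nodes, driven entirely by the storage requirement
print(surplus_cores)  # 48 cores purchased but not needed
```

In this example, compute alone would require only two nodes, so three of the five nodes are bought purely for their disks. Storage-centric nodes address exactly this kind of imbalance.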

Hyper-converged storage advantages include:

- Appliances can be built from commodity hardware

- Hardware vendors offer turnkey appliances

- Hyper-converged deployments can be easily scaled out by installing extra nodes

Hyper-converged storage disadvantages include:

- Conventional hyper-converged appliances consist of tightly integrated hardware, making it difficult or impossible to upgrade compute and storage independently of one another.

- Some vendors only allow deployments to be scaled out by adding nodes. Scaling up -- upgrading the components within a node to increase data storage capacity -- might not be supported.

- Some hyper-converged systems don't allow connectivity to external storage arrays or to a storage area network (SAN).

Use cases for hyper-converged storage

Hyper-converged storage can be used as a general-purpose data storage environment. Even so, there are certain use cases for which hyper-converged systems are especially well suited. Some of the more popular use cases include:

- Public cloud, private cloud or hybrid cloud infrastructure

- Data protection -- backup and disaster recovery

- Virtual desktop infrastructure (VDI)

- Branch office deployments, where server resources are needed on site rather than in the data center

- Server virtualization

Hyper-converged storage vendors and products

Hyper-converged storage systems can be delivered as appliances, providing both the hardware and the software, or as software-only products. Early players and products in the hyper-converged storage market included the Nutanix Virtual Computing Platform and SimpliVity OmniCube. Other hyper-converged storage vendors and products include Maxta Inc.'s Maxta Storage Platform, Nimboxx Atomic Unit hardware, Pivot3 vSTAC, Scale Computing HC3 and VMware Virtual SAN (vSAN).

The Dell EMC VxRail hyper-converged platform combines Dell PowerEdge servers with VMware vSAN HCI software; VMware came under Dell's ownership through its 2016 acquisition of EMC. Dell EMC XC Series HCI appliances pair PowerEdge servers with Nutanix software through an OEM deal.

HPE acquired hyper-converged pioneer SimpliVity in January 2017 and sells SimpliVity software on HPE ProLiant servers.

The Cisco HyperFlex HCI appliance consists of Unified Computing System (UCS) hardware and HCI software from Springpath Inc., which Cisco acquired in August 2017.

Lenovo also sells several hyper-converged platforms, partnering with Nutanix, VMware and others for software.