converged infrastructure

What is converged infrastructure?

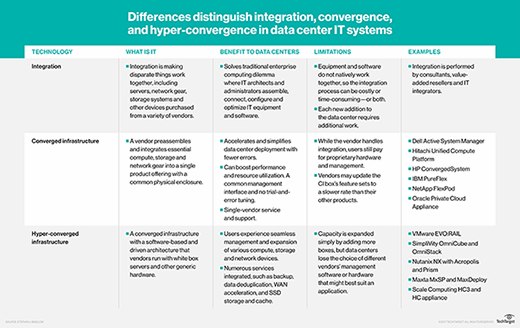

Converged infrastructure, sometimes called converged architecture, is an approach to data center management that packages compute, networking, servers, storage and virtualization tools into a prequalified set of IT hardware. Converged systems include a toolkit of management software.

Converged infrastructure (CI) experienced a long period of popularity as IT organizations sought to address the challenges of traditional locally deployed heterogeneous IT infrastructures, which often suffered from interoperability and collective management issues. Rather than multiple IT assets being procured, deployed and managed as independent silos, CI bundles hardware components with management software to orchestrate and provision the resources as a single integrated system.

The goal of converged infrastructure is to reduce complexity in data center management. The principal design factor is to eliminate issues of hardware incompatibility. The ease of deploying converged infrastructure appeals to enterprises that write cloud-native applications or host an internal hybrid or private cloud.

Gartner classifies converged infrastructure, along with hyperconverged infrastructure (HCI), within the category of integrated infrastructure systems or integrated stack systems.

Comparing converged infrastructure to traditional data center design

Traditional data center design requires that application servers, backup appliances, hypervisors, network cards and file storage systems be individually configured and linked together. Typically, each component is managed separately by a dedicated IT team. For example, servers might be managed by a server team, storage assets by a storage team, networks by a network team and so on. This arrangement serves organizations that have petabytes of data across thousands of applications, but management challenges can arise when trying to rationalize the costs or undertake a refresh cycle.

All of this raises issues of procurement, interoperability, management and support. For example, the storage an organization buys might come from a different vendor than the one that supplies its servers and network cards, with each hardware device having different warranty periods and service-level agreements. All of this hardware must be interconnected, validated, managed and supported over time. If a server or some other part of the traditional infrastructure fails or a change is needed, it takes vital time for the responsible team to address the issue.

By contrast, converged infrastructure vendors offer branded and supported products in which all the components -- servers, software, storage and switches -- reside natively on qualified hardware subsystems that are already tested and validated to work together and managed through a common software application. Owing to its smaller physical footprint in the data center, converged infrastructure helps to reduce the costs associated with cabling, cooling and power.

Converged infrastructure benefits and drawbacks

Converged architecture is based on a modular design that presents resources as pooled capacity. Each preconfigured device added to the system provides a predictable unit of compute, memory or storage. This visibility into resource consumption lets organizations rapidly scale private cloud infrastructure to support cloud computing, virtualization and IT management at remote branch offices.
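The idea of predictable building blocks can be illustrated with a little arithmetic. The following is a minimal Python sketch, not a vendor sizing tool: the `Capacity` type, the per-block figures and the demand figures are all hypothetical values chosen for illustration. It shows how a fixed block size makes it straightforward to estimate how many blocks a workload needs and what pooled capacity results.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Capacity:
    """Resources for one building block or for a whole pool (hypothetical units)."""
    cpu_cores: int
    memory_gb: int
    storage_tb: int


def pooled(block: Capacity, count: int) -> Capacity:
    """Total capacity presented by `count` identical blocks."""
    return Capacity(
        block.cpu_cores * count,
        block.memory_gb * count,
        block.storage_tb * count,
    )


def blocks_needed(demand: Capacity, block: Capacity) -> int:
    """Smallest block count that covers demand in every resource dimension."""
    return max(
        math.ceil(demand.cpu_cores / block.cpu_cores),
        math.ceil(demand.memory_gb / block.memory_gb),
        math.ceil(demand.storage_tb / block.storage_tb),
    )


# Illustrative numbers only; real block sizes come from the vendor's data sheet.
BLOCK = Capacity(cpu_cores=32, memory_gb=256, storage_tb=10)
DEMAND = Capacity(cpu_cores=200, memory_gb=1500, storage_tb=45)

count = blocks_needed(DEMAND, BLOCK)
pool = pooled(BLOCK, count)
print(count, pool)
```

In this example the CPU requirement is the limiting dimension, so the pool ends up with spare memory and storage. That kind of overprovisioning is one practical consequence of scaling in fixed units.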

One advantage to buying a converged system is the peace of mind that comes with purchasing a vendor's validated platform. A typical converged infrastructure stack is preconfigured to address the needs of a specific workload, such as virtual desktop infrastructure or database applications.

Converged infrastructure products let users independently tune the individual components that make up the architecture. For example, if more computing power is needed, an IT organization might opt to procure higher-end computing systems within the CI family of validated products -- yet retain the same confidence in the system's interoperability and performance within the CI deployment. This offers greater management flexibility than other IT architectures. The vendor supplying the converged system provides a single point of contact for maintenance and service issues.

Benefits of converged infrastructure platforms include the following:

- Comprehensive infrastructure. Picking, deploying, managing and maintaining individual devices and systems can be difficult. CI eases much of this trouble by doing the testing and validation in advance and offering a comprehensive group of gear that's already known to work well together.

- Simpler management. Traditional infrastructures often depend on many management tools that might not always find and manage every device or system. CI provides a single-pane-of-glass management tool that finds and manages all of the devices within the CI platform. This greatly improves configuration management and security.

- Better potential performance. Since devices and systems are already tested and validated to work together, an enterprise can often be confident that a CI platform will offer good performance.

However, there are also limitations as to what an organization can do with converged technology. A user has little latitude to alter the basic converged infrastructure configuration; they can choose from systems within the CI family of offerings, but little else. Separately adding components following the initial installation increases cost and complexity, negating the advantages that make converged infrastructure attractive. It can be difficult, or even impossible, to add devices and systems that aren't part of the CI menu of products because of the same potential for interoperability and management issues that plagued traditional heterogeneous environments in the first place.

Possible disadvantages of converged infrastructure platforms can include the following:

- Increased costs. Where a traditional heterogeneous IT infrastructure can be composed of cost-effective or niche devices, a CI platform is often far more limited in the approved device options available. This can result in higher costs for devices, systems and software tools from the CI vendor.

- Platform limitations. Since a CI platform has a limited scope of compute, storage, network and other hardware, a given CI platform might not be suitable for all workloads or tasks. In addition, applications with demanding compute, storage and networking requirements might not yield good operational results on a CI platform. It's important to understand the requirements of each intended workload and confer with the CI vendor to ensure satisfactory workload performance.

- Vendor lock-in. CI poses the risk of vendor lock-in because an enterprise will be dependent on that CI vendor for procurement, installation and ongoing support. The ease and simplicity that CI can offer will often mitigate the increased deployment costs, but it's a factor to consider. Further, the enterprise is dependent on the vendor for help and repairs when failures arise. The CI experience is only as good as the CI vendor.

How to deploy a converged infrastructure

There are various ways to implement converged infrastructure. An organization could use a vendor-tested hardware reference architecture, install a cluster of standalone appliances or take a software-driven, hyperconverged approach.

Build a suitable CI platform. A converged infrastructure reference architecture is a set of preconfigured and validated hardware recommendations that target specific data center workloads. A vendor's reference architecture helps guide enterprises on the optimal deployment and use of the converged infrastructure components.

Buy a CI platform from a vendor. Users can opt to purchase a dedicated appliance as the platform on which to run a converged infrastructure. Using this approach, a vendor provides hardware appliances that consolidate compute, storage, networking and virtualization resources, either sourced directly from the vendor or its partners. Organizations can expand the converged cluster by purchasing additional appliances to achieve horizontal scalability.

Consider an HCI alternative. Hyperconverged infrastructure abstracts compute, networking and storage from the underlying physical hardware, while adding virtualization software features. Hyperconverged products typically concentrate separate compute, storage, network and other devices into a single consolidated appliance. HCI also offers additional functionality for cloud bursting or disaster recovery. Administrators can manage both physical and virtual infrastructures -- whether onsite or in the cloud -- in a federated manner using a single pane of glass.

Converged vs. hyperconverged infrastructure vs. composable infrastructure

Although the terms converged infrastructure and hyperconverged infrastructure are sometimes used interchangeably, the technologies differ slightly in implementation and range of features.

The HCI market sprang from the concept of converged infrastructure. In a converged infrastructure, the discrete hardware components can be separated and used independently. This component separation isn't supported by HCI platforms, which consolidate all the hardware devices and systems to achieve high levels of system integration.

Hyperconverged infrastructure concentrates hardware into a single dedicated appliance and enables other components to be added by implementing software-defined storage features. For example, HCI supports such data center necessities as backup software, inline data deduplication and compression, replication, snapshots and wide area network optimization. A good way to think of the distinction is that converged infrastructure is based mainly around the supported hardware, whereas HCI combines the hardware with granular data center services.

In general, converged infrastructure products are customized to support the specific application workloads of large-scale enterprises. HCI is geared for small and midrange enterprises that don't require as much customization and is also frequently deployed for specific outcomes. For example, an HCI system might be ideal for remote sites and edge computing tasks, or in a larger data center as a designated private cloud platform.

A related term, composable infrastructure, bears similarities to converged and hyperconverged infrastructure systems. A composable infrastructure separates functional elements of the infrastructure into discrete modules, and then allows any number of those modules to be virtualized and added on-demand to compose the desired infrastructure that best serves the workload. A common management tool discovers and pools all those modules and then allows resources to be provisioned from those pools. The distinction of composable infrastructure is that users can reconfigure the infrastructure as workloads evolve within a data center. In composable infrastructure, an IT administrator doesn't need to be concerned with the physical location of the IT components. Instead, objects expose information via management application programming interfaces (commonly referred to as APIs) to enable automated discovery and delivery of services on demand.
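The discover, pool and provision cycle described above can be sketched in a few lines of Python. This is a toy model under stated assumptions, not any vendor's management API: the `ResourcePool` class, its method names and the resource names are hypothetical and exist only to illustrate how capacity is pooled and then composed on demand without regard to where the hardware physically sits.

```python
class ResourcePool:
    """Toy model of a composable pool: tracks free capacity per resource type."""

    def __init__(self):
        self.free = {}

    def discover(self, kind, amount):
        """Add a newly discovered module's capacity to the pool."""
        self.free[kind] = self.free.get(kind, 0) + amount

    def compose(self, request):
        """Reserve the requested resources, or raise if the pool falls short."""
        short = [k for k, v in request.items() if self.free.get(k, 0) < v]
        if short:
            raise ValueError(f"insufficient capacity for: {sorted(short)}")
        for kind, amount in request.items():
            self.free[kind] -= amount
        return dict(request)

    def release(self, allocation):
        """Return a workload's resources to the pool for reuse."""
        for kind, amount in allocation.items():
            self.free[kind] += amount


# Hypothetical modules and workload request, for illustration only.
pool = ResourcePool()
pool.discover("cpu_cores", 64)
pool.discover("storage_tb", 20)

allocation = pool.compose({"cpu_cores": 16, "storage_tb": 5})
after_compose = dict(pool.free)

pool.release(allocation)
after_release = dict(pool.free)
print(after_compose, after_release)
```

The check in `compose` runs before any capacity is deducted, so a request that cannot be satisfied in full leaves the pool unchanged. Releasing an allocation returns the pool to its prior state, which mirrors the reconfiguration of infrastructure as workloads evolve.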

Converged infrastructure vs. cloud computing

Cloud computing has gained enormous popularity because of its seamless, self-service, on-demand characteristics. Such important capabilities depend heavily on underlying technologies, such as virtualization to abstract software from the underlying hardware, as well as automation to streamline many of the tasks needed to provision and manage IT resources.

Traditional heterogeneous infrastructures can be used for private and hybrid cloud deployments, but it requires significant effort on the part of IT staff to build the hardware and software stack needed. Converged infrastructure, and now HCI, could ease the onramp to cloud computing by handling much of the configuration and management right out of the box. This allows IT teams to simply add the cloud software layer and focus on developing cloud services.

Thus, CI and HCI aren't required for private and hybrid clouds, but the benefits they bring can speed up a business's successful adoption of cloud technologies.

Converged infrastructure vendors and products

The concept of convergence focuses on bringing disparate hardware devices and software tools together to provide a seamless, proven, turnkey deployment and operational experience for the enterprise.

The earliest iterations of CI simply involved servers from one vendor, storage subsystems from another, networking gear from yet another and so on until a complete IT infrastructure platform was established. The difference is that this CI system was marketed, sold, deployed, managed and maintained as a single cohesive platform provided through a technology vendor.

As years passed and the concept of CI evolved, product offerings became more tightly integrated and eventually became HCI, which is also sometimes called a data center in a box.

Today, there are numerous IT vendors in the marketplace offering CI-type platforms. However, most offerings fall into one of three groups: platforms intended for specific systems such as SAP HANA or artificial intelligence implementations, legacy support of existing CI platforms, and newer offerings that focus on HCI product lines. An IT organization would be hard-pressed to find a CI vendor that doesn't possess one or more HCI products in its portfolio. Even so, numerous vendors continue to provide CI products, including the following:

- Cisco FlexPod.

- Dell PowerEdge FX.

- Dell PowerEdge VRTX.

- Dell EMC Ready Stack.

- Dell VxBlock System 1000.

- Hitachi Unified Compute Platform.

- Hewlett Packard Enterprise (HPE) ConvergedSystem 500 for SAP HANA.

- HPE ConvergedSystem 700.

- IBM CloudBurst.

- IBM PureSystems.

- IBM VersaStack.

- NetApp Ontap AI.

- Oracle Exalogic.

- Oracle SuperCluster.

Considerations when vetting converged, hyperconverged products

The fact that the terms converged infrastructure and hyperconverged infrastructure are sometimes used synonymously underscores the importance of getting accurate, detailed information from vendors. An organization should ask any vendor under consideration to demonstrate whether its product truly is a converged infrastructure. It should also find out whether the converged product in question enables network devices, servers and storage systems to run independently of one another -- something an HCI platform typically doesn't support.

As is the case when purchasing a legacy IT architecture, customers will have to deal with some vendor lock-in when purchasing converged infrastructure, although this might not be as daunting as it sounds. For one thing, converged infrastructure is designed as a turnkey platform for rapid implementation, and it uses commodity servers and network gear the organization would need to buy anyway. Having a common hardware and software interface also makes it easier to maintain and manage a converged infrastructure.

But that's not to say vendor lock-in doesn't present risks. An IT organization should request information on the vendor's product cadence and timeline for integrating additional features and functionality. For example, some converged infrastructures are designed with all-flash systems, while others are available only in a hybrid -- flash and traditional disk -- configuration. Determine in advance if a vendor offers enough flexibility to support your organization's growth, and plan accordingly.

Equally important is getting clarity on the longevity of the chosen vendor. This involves planning for the worst-case scenario, such as the vendor going out of business or being acquired and allowing product development to languish.

The future of converged infrastructure

Converged infrastructure as an IT data center technology has largely been supplanted by HCI. In general terms, few businesses today are likely to invest in a new CI platform when HCI platforms can offer even greater levels of integration, performance and management. The ideas behind CI led directly to the development of HCI. Today, CI lives on as a pivotal leap in thinking about IT infrastructure and the importance of striking a balance between IT staff time, infrastructure costs and performance, and business outcomes.
